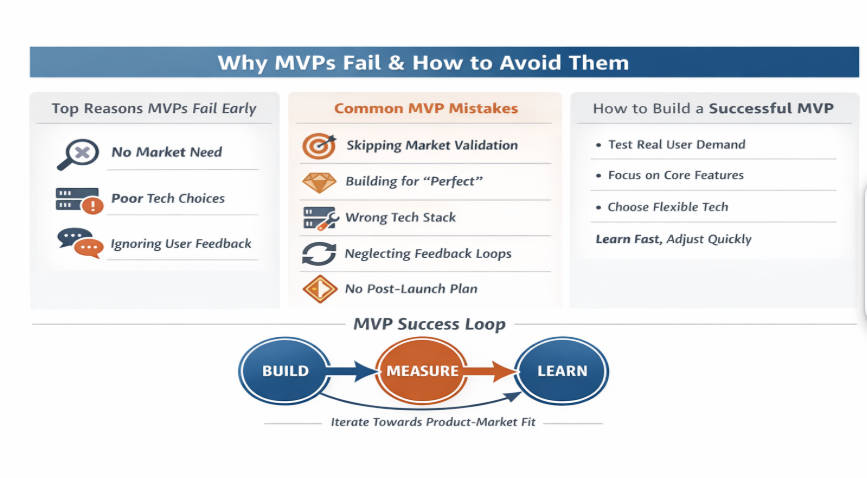

Mistake #3: Poorly Defined Goals & KPIs

Why MVPs Fail Quietly Here

Many MVPs don’t fail outright — they drift. Without clear success criteria, teams interpret every outcome as progress, even when learning is minimal.

This creates a dangerous illusion of momentum.

What Good MVP Goals Actually Look Like

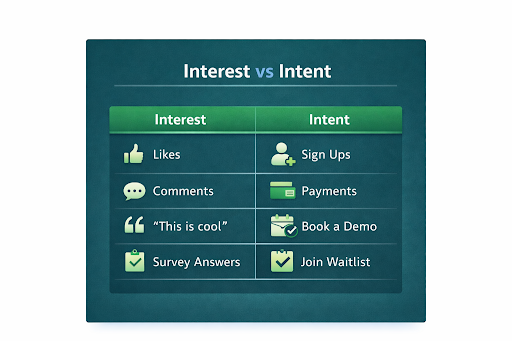

Strong MVP goals are:

- Behavior-based, not opinion-based

- Binary or directional, not vague

- Tied to a core assumption

For example, instead of setting a vague goal like “increase engagement,” define a concrete outcome such as “at least 30% of users complete the core action twice within their first seven days.”
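A goal phrased this way can be checked directly against event data. The following is a minimal sketch, not a prescribed implementation: the function name, the data shapes (a signup map and a list of core-action events), and the default thresholds are illustrative assumptions taken from the example goal above.

```python
from datetime import datetime, timedelta


def core_action_goal_met(signups, events, threshold=0.30, min_actions=2, window_days=7):
    """Check a goal like "at least 30% of users complete the core action
    twice within their first seven days".

    signups: dict mapping user_id -> signup datetime (assumed shape)
    events:  list of (user_id, datetime) pairs, one per core action (assumed shape)
    Returns (goal_met, share_of_users_who_qualified).
    """
    if not signups:
        return False, 0.0

    counts = {user_id: 0 for user_id in signups}
    for user_id, timestamp in events:
        start = signups.get(user_id)
        # Count only actions inside the user's own first-N-days window.
        if start is not None and start <= timestamp < start + timedelta(days=window_days):
            counts[user_id] += 1

    qualified = sum(1 for count in counts.values() if count >= min_actions)
    share = qualified / len(signups)
    return share >= threshold, share
```

Because the function returns both a yes/no answer and the underlying share, the same check supports a binary goal and a directional one.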

Metrics That Matter at MVP Stage

Founders often track too much or the wrong things. At MVP stage, focus on:

- Activation (did users reach value?)

- Retention (did they come back?)

- Conversion (did they take the intended action?)

Founder insight: If you can’t explain your MVP’s success metric in one sentence, it’s too complex.

Mistake #4: Cramming Too Many Features

Scope creep is a classic pitfall. An MVP overloaded with features dilutes your ability to test the core problem.

Why This Happens

Founders often start building too many features because they haven’t prioritized around a single hypothesis. Each extra feature:

- Slows development

- Obscures learning outcomes

- Increases technical complexity

Minimal doesn’t mean half-baked — it means prioritized around one learning objective.

Mistake #5: Ignoring Tech Stack Fit

Short-Term vs Long-Term Tradeoffs

One of the most technical yet crucial decisions in MVP development is choosing the right technology stack. The wrong choice can:

- Slow iteration

- Increase costs

- Lock you into maintenance overhead

- Limit hiring options

Many teams pick a technology because it's trendy, not because it fits their scope, timeline, and expected growth.

The Balance: Learnability vs Scalability

For MVPs, ideal tech selections prioritize:

- Quick testing and deployment

- Low cognitive load for developers

- Ease of integration with analytics and feedback tools

- Escapability (ability to switch later without huge rewrites)

The right MVP stack prioritizes learnability first, scalability second, with the ability to evolve without major rewrites.

Mistake #6: Weak Feedback Loops and No Post-Launch Plan

Many MVPs fail because feedback is sporadic and post-launch decisions are reactive. Without structured feedback loops and a clear plan, teams end up chasing noise instead of learning. Effective MVPs embed feedback into the product, capturing input at meaningful moments and combining quantitative data (what users do) with qualitative insights (why they do it). Defining success signals upfront—such as activation, retention, or engagement depth—and mapping them to clear decisions to iterate, pivot, or pause prevents premature or misaligned changes.

Juicero illustrates this principle vividly. The company raised $120 million for a Wi-Fi-connected juicer using proprietary packs, assuming users would adopt the device as designed. Post-launch, it became clear that the packs could be hand-squeezed with nearly the same result. With no validation strategy or post-launch plan to interpret and act on feedback, Juicero was unable to adjust its approach and shut down just 16 months later (CNBC).

Founder takeaway: Whether building hardware or software, MVPs must pair structured feedback loops with evidence-driven post-launch decisions. Skipping either can turn even the most hyped product into a costly lesson. Learning and adaptability are as critical as execution.

Mistake #7: Building Without Scalable Architecture

A common misconception is that scalable architecture requires complex systems, enterprise tooling, or overengineering. In reality, it means designing for change. Many teams ignore this early, only to face costly rewrites once traction appears—one of the most expensive MVP mistakes before product-market fit.

Electroloom’s 3D clothing printer MVP illustrates this challenge. The startup’s prototype couldn’t scale production, forcing rushed rewrites amid funding shortages and misaligned user targeting. Early investment in modular design would have allowed pivots without a full rebuild, showing how “just-enough” architecture protects focus while enabling adaptation.

What “Just Enough” Architecture Looks Like at the MVP Stage

- Components are modular

- Core logic is isolated

- Dependencies are replaceable
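The three properties above can be sketched in a few lines. This is an illustrative example under stated assumptions: `Notifier`, `InMemoryNotifier`, and `complete_onboarding` are hypothetical names, and the "dependency" here is a notification provider chosen purely for demonstration.

```python
from typing import Protocol


class Notifier(Protocol):
    """Boundary the core logic depends on; any provider can implement it."""

    def send(self, user_id: str, message: str) -> None: ...


class InMemoryNotifier:
    """Stand-in provider for this sketch; a real email or push client
    with the same send() method could replace it."""

    def __init__(self) -> None:
        self.sent = []

    def send(self, user_id: str, message: str) -> None:
        self.sent.append((user_id, message))


def complete_onboarding(user_id: str, notifier: Notifier) -> str:
    """Core logic: it does not know which provider delivers the message."""
    notifier.send(user_id, "Welcome aboard")
    return "onboarded"
```

Swapping the provider means writing one new class with a `send` method; `complete_onboarding` stays untouched, which is the kind of change isolation the list describes.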

Benefits of This Approach

- Faster pivots

- Safer experimentation

- Lower long-term cost

Founder insight: If changing one feature requires touching everything else, the MVP is fragile. Building with modularity and adaptability in mind lets the product evolve based on real feedback without draining resources or stalling momentum.

Mistake #8: Misaligned Stakeholder Expectations

Success in MVP execution requires alignment across:

- Vision (what are we testing?)

- Scope (what gets built now vs later?)

- Metrics (what counts as evidence?)

- Timing (when do we decide pivot vs persevere?)

When teams lack alignment, development slows, priorities shift mid-sprint, and meaningful insights are lost in day-to-day churn. Clear documentation, shared objectives, and regular check-ins reduce ambiguity and keep execution focused on real learning.

Mistake #9: Ignoring Data & Analytics Setup

The Visibility Trap

Many MVPs ship with little to no instrumentation, forcing founders to rely on intuition instead of evidence. Without visibility into how users actually move through the product, teams end up iterating in the dark and burning valuable time.

From the start, your MVP should capture signals that reflect real user behavior, including funnel flows, where users drop off, how often core tasks are completed, and when feedback is triggered inside the product. These aren’t vanity metrics; they are decision inputs.
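As a rough illustration of turning raw events into a drop-off view, the sketch below counts how many users reach each funnel step and how many are lost between steps. The step names and the `(user_id, event_name)` event shape are assumptions made for this example, not part of any particular analytics tool.

```python
from collections import defaultdict

# Hypothetical funnel; replace with the steps that define value in your product.
FUNNEL_STEPS = ["signed_up", "created_project", "invited_teammate"]


def funnel_report(events, steps=FUNNEL_STEPS):
    """events: iterable of (user_id, event_name) pairs (assumed shape).

    Returns a list of (step, users_reached, drop_off_from_previous_step),
    where drop-off is a fraction, or None when it cannot be computed.
    """
    seen = defaultdict(set)
    for user_id, name in events:
        seen[user_id].add(name)

    report = []
    previous = None
    for index, step in enumerate(steps):
        # A user counts for a step only if they also hit every earlier step.
        required = steps[: index + 1]
        reached = sum(1 for names in seen.values() if all(s in names for s in required))
        drop_off = None if previous in (None, 0) else 1 - reached / previous
        report.append((step, reached, drop_off))
        previous = reached
    return report
```

Even a report this simple shows where users leave, which is usually a more useful decision input than a total signup count.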

Building analytics into the product from day one enables evidence-based iteration. More importantly, it shortens the learning loop that drives product-market fit, helping teams understand not just what users say, but what they actually do.

Mistake #10: Forgetting Security & Compliance Early

Speed is essential at the MVP stage, but overlooking security and compliance early often creates hidden drag on growth. Even early users expect basic protections around their data, and a single lapse can erode trust before your product has a chance to prove value.

More importantly, early security thinking directly shapes MVP execution decisions, including:

- Authentication and access control: Overly broad access may speed development initially, but it increases risk and complicates future role separation.

- Data handling and storage choices: Decisions around encryption, backups, and data exposure affect how safely you can iterate with real user data.

- Cloud configuration and permissions: Poorly scoped permissions and default settings are among the most common sources of early-stage breaches.

- Regulatory readiness by user geography: Even MVPs may need to respect basic consent, retention, or privacy expectations depending on where users are located.
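For the access-control point in particular, a conscious minimal decision can be as small as a deny-by-default permission table. The sketch below is illustrative only: the role names and action names are hypothetical, and a real product would load them from its own configuration.

```python
# Hypothetical roles and actions, listed explicitly so nothing is allowed by accident.
ROLE_PERMISSIONS = {
    "viewer": {"read_own_data"},
    "support": {"read_own_data", "read_user_profile"},
    "admin": {"read_own_data", "read_user_profile", "export_user_data"},
}


def is_allowed(role: str, action: str) -> bool:
    """Deny by default: unknown roles and unlisted actions are refused."""
    return action in ROLE_PERMISSIONS.get(role, set())
```

Starting with explicit roles, even if there are only two or three, makes later role separation an edit to a table rather than a rewrite of every endpoint.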

Addressing these areas early doesn’t mean chasing certifications or overengineering. It means making conscious, minimal decisions that protect user data and keep your MVP flexible. When security is built into the foundation, later compliance becomes a straightforward extension rather than a disruptive rebuild.

Mistake #11: Treating the MVP as a One-Off Project

An MVP is not a box to check—it’s the start of an ongoing learning system. Teams that treat the MVP as a one-time build often stall after launch, unsure what to prioritize or how to interpret early signals.

High-performing teams treat MVPs as structured experiments. They launch with intent and connect outcomes directly to decisions through:

- Explicit hypotheses: Clear assumptions about user problems or behaviors the MVP is meant to validate.

- Defined decision signals: Metrics or behaviors that indicate whether to iterate, pivot, or pause.

- Short learning loops: Fast cycles that turn real usage data into concrete product changes.

- Planned iteration triggers: Predefined conditions that guide the next sprint instead of reactive feature changes.
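One way to make these elements concrete is to write the hypothesis, its metric, and its decision thresholds down before launch. The sketch below is an assumption-laden example, not a standard method: the `Experiment` class, its field names, and the rule that maps a metric value to iterate, pivot, or pause are all illustrative choices.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Experiment:
    hypothesis: str     # the assumption the MVP is meant to validate
    metric: str         # the single signal used as evidence
    iterate_at: float   # at or above this value: keep refining the current direction
    pivot_below: float  # below this value: the core assumption looks wrong

    def __post_init__(self) -> None:
        if self.pivot_below > self.iterate_at:
            raise ValueError("pivot_below must not exceed iterate_at")

    def decide(self, observed: float) -> str:
        """Map an observed metric value to a predefined decision."""
        if observed >= self.iterate_at:
            return "iterate"
        if observed < self.pivot_below:
            return "pivot"
        # Ambiguous middle band: pause feature work and gather more evidence.
        return "pause"
```

For instance, `Experiment("New users repeat the core action", "7-day repeat rate", iterate_at=0.30, pivot_below=0.10).decide(0.20)` returns `"pause"`, because the result falls between the two thresholds agreed on in advance.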

The value of an MVP emerges after launch, not at it. Teams that operationalize learning move faster with less confusion, while those treating MVPs as mini-products often lose momentum to indecision and internal debate.

Conclusion: Build to Learn, Not Just to Launch

The most common MVP mistakes aren’t about execution detail — they’re about mindset. If your MVP is built for validation, not perfection, you reduce risk, lower cost, and accelerate discovery. Avoiding these mistakes and integrating structured feedback, clear goals, and flexible architecture empowers teams to reach product-market fit faster and with confidence.

If you are building an MVP and want clarity around validation, decision-making, and technical foundations, reach out to Splitbit Innovative Solutions to design learning-first MVPs that scale without rework. From validation strategy to architecture and iteration planning, we help teams avoid building the wrong product twice.